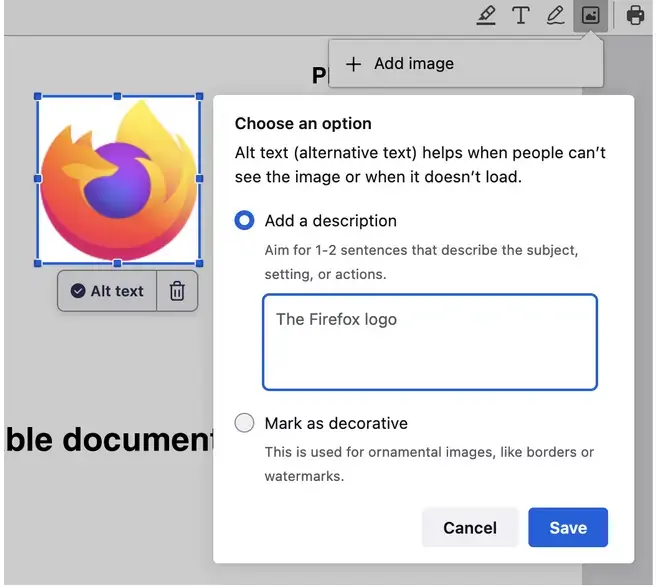

New accessibility feature coming to Firefox, an “AI powered” alt-text generator.

EDIT: The AI creates an initial description, which is then improved with crowdsourced additional context per image. Look for the “Example Output” heading in the article.

"Starting in Firefox 130, we will automatically generate an alt text and let the user validate it. So every time an image is added, we get an array of pixels we pass to the ML engine and a few seconds after, we get a string corresponding to a description of this image (see the code).

…

Our alt text generator is far from perfect, but we want to take an iterative approach and improve it in the open.

…

We are currently working on improving the image-to-text datasets and model with what we’ve described in this blog post…"
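The flow in the quote (pixels in, description out, user validates) can be sketched roughly like this. It is only an illustration, not Firefox's code: `suggest_alt_text`, `describe` and `validate` are hypothetical names, with `describe` standing in for the ML engine and `validate` for the confirmation step.

```python
from typing import Callable, Optional, Sequence


def suggest_alt_text(
    pixels: Sequence[int],
    describe: Callable[[Sequence[int]], str],
    validate: Callable[[str], Optional[str]],
) -> Optional[str]:
    """Generate a draft description, then let the user accept, edit, or reject it.

    `describe` is a stand-in for the ML engine (hypothetical).
    `validate` is a stand-in for the confirmation dialog (hypothetical);
    it returns the final text, or None if the user rejects the draft.
    """
    draft = describe(pixels).strip()
    if not draft:
        return None  # nothing usable came back from the model
    return validate(draft)


# Stubs so the sketch runs without a real model or UI.
fake_model = lambda pixels: "A dog on a beach"
accept_as_is = lambda draft: draft

print(suggest_alt_text([0, 0, 0, 255], fake_model, accept_as_is))
```

The point of the shape is that the generated string never becomes alt text without passing through the user's validation step first.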

Overall see nothing wrong with this. Encourages users to support alt-text more, which we should be doing for our disabled friends anyway. I really like the confirmation before applying.

On the one hand, having an AI generated alt-text on the client side would be much better than not having any alt-text at all. On the other hand, the pessimist in me thinks that if it becomes widely available, website makers will feel less of a need to add proper alt-text to their content.

A more optimistic way of looking at it is that this tool makes people more interested in alt-text in general, meaning more tools are developed to make use of it, meaning more web devs bother with it in the first place (either using this tool or manually)

If they feel less need to add proper alt-text because people’s browsers are doing a better job anyway, I don’t see why that’s a problem. The end result is better alt text.

I don’t think they’re likely to do a better job than humans any time soon. We can hope that it won’t be extremely misleading too often.

I dunno, I suspect most human alt texts are vague and nondescriptive. I’m sure a human trying their hardest could outwrite an AI alt text… But I’d be pretty shocked if AIs weren’t already better than the average alt text.

Alt text: It’s for SEO, isn’t it?

- Marketing

True, but if it genuinely works really well then does it really matter? Seems like the change would be a net positive.

Sounds like proton and linux gaming

The biggest problem with AI alt text is that it lacks the ability to determine and add in context, which is particularly important in social media image descriptions. But people adding useless alt text isn’t exactly a new thing either. If people treat this as a starting place for adding an alt text description and not a “click it and I don’t have to think about it” solution I’m massively in support of it.

They just need to gamify it. Have a “Verified Accurate Alt-Text Submissions” leaderboard or something.

I would expect it’d be not too hard to expand the context fed into the AI from just the pixels to including adjacent text as well. Multimodal AIs can accept both kinds of input. Might as well start with the basics though.

I like this approach of having a model locally and running it locally. I’ve been using the Firefox website translator and it’s great. Handy, and it doesn’t send my data to Google. That I know of, ha.

Neat. I just hope it can be disabled to save power.

I hope this’ll be useful for me. I wonder how it compares to LLaVA?

There are way more companies who want to text-mine user content than there are blind people using the internet to read my content.

Babe another pointless AI just dropped

“I don’t need Alt text so it must be useless”

it’s not pointless; it’s amazing for accessibility, especially in pdfs.

Well, I do agree it’ll be useful for people who need it, but for most people it’s pretty pointless. I hope they at least don’t enable it by default, like Windoze Sticky Keys, because AI uses a lot of system resources for little benefit, especially self-hosted AI.

beehaw is a safe-space, we shouldn’t vilify the experiences/needs of people who need alt-text. this could be game changing for people who need it.

Alternatively, it could be very frustrating for people who need it. Computer-generated translations are often very bad compared to human ones, and image recognition adds another layer of complexity that will very likely lack nuance. It could create a false sense of accessibility with bad alt-text, and could make it more difficult to spot real alt-text if it isn’t being tagged or labeled as AI generated.

i don’t think we disagree in a vacuum but bringing that up in the context of this particular thread is probably unhelpful

This is actually one of the few cases where it makes sense. It’s for alt-text for people who browse with TTS.

Yeah, this is actually a pretty great application for AI. It’s local, privacy-preserving and genuinely useful for an underserved demographic.

One of the most wholesome and actually useful applications for LLMs/CLIP that I’ve seen.

It’s for blind people; it lets them know what is in images using a screen reader. Just because it doesn’t apply to you doesn’t mean it’s useless.

Think AI is pointless when it doesn’t apply to you?

One thing I’d love to see in Firefox is a way to offload the translation engine to my local ollama server. This way I can get much better translations but still have everything private.

Now i want this standalone in a commandline binary, take an image and give me a single phrase description (gut feeling says this already exists but depending on Teh Cloudz and OpenAI, not fully local on-device for non-GPU-powered computers)

Ollama + llava-llama3

You now just need a CLI wrapper to interact with the Ollama API.
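A minimal sketch of such a wrapper, using only the Python standard library. Assumptions: Ollama is running on its default local port, the `llava-llama3` model has already been pulled, and the script name `describe.py`, the prompt text, and the function names are made up for illustration.

```python
#!/usr/bin/env python3
"""Describe an image with a local Ollama server (hypothetical helper script)."""
import base64
import json
import sys
import urllib.request

# Assumptions: default local Ollama address, model already pulled.
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL = "llava-llama3"
PROMPT = "Describe this image in one short phrase."


def build_payload(image_bytes: bytes, model: str = MODEL, prompt: str = PROMPT) -> dict:
    """Build the JSON body for Ollama's /api/generate endpoint."""
    return {
        "model": model,
        "prompt": prompt,
        # Images are sent as base64-encoded strings.
        "images": [base64.b64encode(image_bytes).decode("ascii")],
        # Ask for a single JSON object instead of a stream of chunks.
        "stream": False,
    }


def describe(image_path: str) -> str:
    """Send the image to the local server and return the generated text."""
    with open(image_path, "rb") as f:
        payload = build_payload(f.read())
    request = urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"].strip()


# Only runs when called as: python3 describe.py IMAGE
if __name__ == "__main__" and len(sys.argv) == 2:
    print(describe(sys.argv[1]))
```

Saved as `describe.py`, it would be run as `python3 describe.py photo.jpg`. Everything stays on the local machine, though without a GPU a model of this size may be slow.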

So, it’s possible to build but no one has made it yet? Because i have negative interest in messing with that kinda tech, and would rather just “apt-get install whatever-image-describing-gizmo” so i wouldn’t be the one who does it

this is how i feel about basically all technology nowadays, it’s all so artificially limited by capitalism.

nothing fucking progresses unless someone figures out a way to monetize it or an autistic furry decides to revolutionize things in a weekend because they were bored and inventing god was almost stimulating enough

Skimming through it, it wasn’t fully clear to me: is this just for their PDF editor?

It is for websites. This is most useful for readers that don’t display images. The feature for websites should be added for version 130. I’m on Developer Edition and I am currently on 127. It will be implemented for PDFs in the future after that.

Thanks for clarifying

But even for a simple static page there are certain types of information, like alternative text for images, that must be provided by the author to provide an understandable experience for people using assistive technology (as required by the spec)

I wonder if this includes websites that use `<figcaption>` with `alt` emptied.

When I used a similar feature in Ice Cubes (Mastodon app) it generated very detailed but ultimately useless text because it does not understand the point of the image and focuses on things that don’t matter. Could be better here but I doubt it. I prefer writing my own alt text but it’s better than nothing.
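On the `<figcaption>` question: an image with a missing `alt` and one with a deliberately empty `alt=""` are different cases, since an empty `alt` tells assistive technology to skip the image. How Firefox treats either case is not stated in this thread. The sketch below only shows how the cases can be told apart; the class name `AltAudit` and the status labels are made up for illustration.

```python
from html.parser import HTMLParser


class AltAudit(HTMLParser):
    """Collect each <img> and classify its alt attribute (illustrative only)."""

    def __init__(self):
        super().__init__()
        self.results = []  # (src, status, inside_figure) tuples
        self._figure_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag == "figure":
            self._figure_depth += 1
        if tag != "img":
            return
        attributes = dict(attrs)
        if "alt" not in attributes:
            status = "missing"  # the author provided nothing
        elif not (attributes["alt"] or "").strip():
            status = "empty"  # the author explicitly left it blank
        else:
            status = "present"
        self.results.append((attributes.get("src"), status, self._figure_depth > 0))

    def handle_endtag(self, tag):
        if tag == "figure" and self._figure_depth > 0:
            self._figure_depth -= 1


audit = AltAudit()
audit.feed(
    '<figure><img src="a.png" alt=""><figcaption>A cat</figcaption></figure>'
    '<img src="b.png"><img src="c.png" alt="Logo">'
)
for src, status, in_figure in audit.results:
    print(src, status, "in figure" if in_figure else "standalone")
```

A generator that treated "empty" the same as "missing" would overwrite a deliberate choice by the author, which is the concern with captioned figures.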